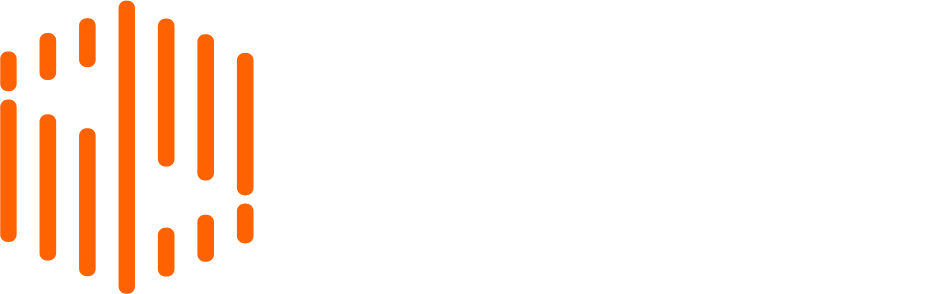

AI-Amplified Social Engineering: Deconstructing the ShinyHunters Rampage

TL;DR

In May 2026, the extortion group ShinyHunters orchestrated a relentless series of high-profile corporate breaches that reshaped the cybersecurity landscape. Using AI-Amplified Social Engineering, the attackers bypassed traditional multi-factor authentication (MFA) and compromised major organizations, including Carnival Corporation, Instructure Canvas, and Charter Communications. Instead of